Hyper Flow 971991551 Neural Node

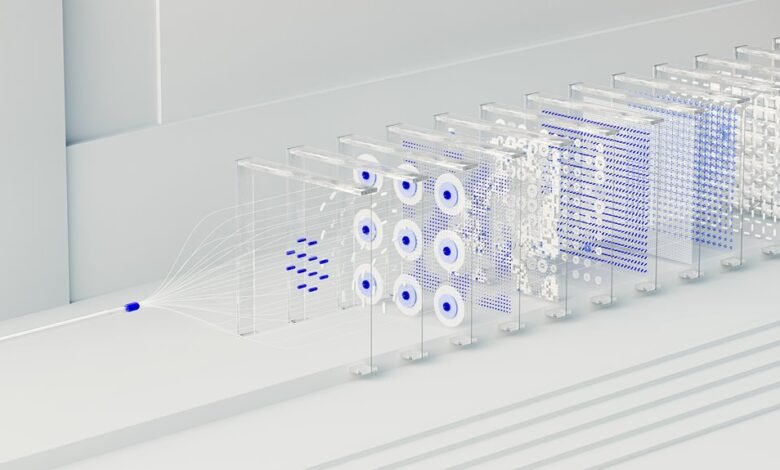

The Hyper Flow 971991551 Neural Node is a modular unit that encodes patterns and adapts through localized learning signals. Its architecture favors plug-and-play scalability, so a network of these nodes can grow organically without rigid wiring. In real-time settings, coordination among neighboring nodes supports dynamic resource allocation and fault tolerance. The intended result is energy-efficient inference in changing environments, although open questions remain about how far modular coordination can scale and where its performance limits lie. These questions motivate a closer look at how such nodes stay coherent at scale.

What Is the Hyper Flow 971991551 Neural Node?

The Hyper Flow 971991551 Neural Node is a conceptual component designed to model complex information processing within a broader neural-inspired architecture. It functions as a modular unit that encodes patterns, adapts via localized learning signals, and interfaces with neighboring nodes.
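As a minimal sketch of such a unit, assume a single weight vector stands in for the encoded pattern and the delta (Widrow-Hoff) rule stands in for the unspecified localized learning signal. The class `NeuralNode` and its methods are hypothetical illustrations, not a published interface.

```python
from dataclasses import dataclass, field


@dataclass
class NeuralNode:
    """Hypothetical node: a weight vector encodes a pattern, learning uses
    only locally available signals, and outputs can be sent to neighbors."""

    weights: list[float]
    learning_rate: float = 0.1
    neighbors: list["NeuralNode"] = field(default_factory=list)

    def forward(self, inputs: list[float]) -> float:
        # Weighted sum: how strongly the input matches the stored pattern.
        return sum(w * x for w, x in zip(self.weights, inputs))

    def local_update(self, inputs: list[float], target: float) -> float:
        # Delta rule: the update depends only on this node's own inputs,
        # output, and target, not on an error propagated through the network.
        error = target - self.forward(inputs)
        self.weights = [w + self.learning_rate * error * x
                        for w, x in zip(self.weights, inputs)]
        return error

    def connect(self, other: "NeuralNode") -> None:
        # Explicit links: a node only talks to nodes it has been wired to.
        self.neighbors.append(other)

    def send_to_neighbors(self, inputs: list[float]) -> list[float]:
        # Each neighbor receives this node's output as a one-element input.
        out = self.forward(inputs)
        return [n.forward([out]) for n in self.neighbors]


node = NeuralNode(weights=[0.0, 0.0])
for _ in range(50):
    node.local_update([1.0, 2.0], target=1.0)
print(round(node.forward([1.0, 2.0]), 3))  # prints 1.0
```

Because each update uses only quantities the node already holds, nodes can learn independently of one another; coordination happens only through the explicit neighbor links.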

The name reflects two roles: "Hyper Flow" describes the coordination dynamics that emerge among nodes, while "Neural Node" describes the individual unit, which provides structured, scalable pathways for adaptive cognition and flexible information routing.

How the Modular Node Design Enables Plug-and-Play Scalability

Modular node design enables plug-and-play scalability by decoupling functional units from their wiring, allowing components to be added, replaced, or reconfigured without disrupting the entire system.

Because nodes attach through a common interface rather than fixed connections, the network can grow incrementally while existing nodes continue to operate within their performance limits.

Since modules can be recombined without heavy reengineering, new workflows can be assembled from existing nodes, which lowers the cost of experimentation and keeps the system robust and adaptable.
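One way to picture decoupling functional units from their wiring is a container in which modules are registered by name and connections refer only to names. The sketch below assumes that design; `NodeGraph` and its methods are hypothetical, and modules are plain functions for brevity. Replacing a module under an existing name leaves its connections untouched.

```python
from typing import Callable, Dict, List

Module = Callable[[float], float]


class NodeGraph:
    """Hypothetical plug-and-play container: wiring refers to module names,
    never to module objects, so modules can be swapped independently."""

    def __init__(self) -> None:
        self.modules: Dict[str, Module] = {}
        self.edges: Dict[str, List[str]] = {}

    def add(self, name: str, module: Module) -> None:
        # Adding a new module, or replacing one, keeps existing edges intact.
        self.modules[name] = module
        self.edges.setdefault(name, [])

    def wire(self, src: str, dst: str) -> None:
        self.edges[src].append(dst)

    def remove(self, name: str) -> None:
        # Drop the module, its outgoing edges, and every edge that targets it.
        self.modules.pop(name, None)
        self.edges.pop(name, None)
        for src in self.edges:
            self.edges[src] = [d for d in self.edges[src] if d != name]

    def run(self, start: str, value: float) -> Dict[str, float]:
        # Push a value through the wiring; assumes tree-shaped, acyclic wiring.
        outputs: Dict[str, float] = {}
        pending = [(start, value)]
        while pending:
            name, x = pending.pop()
            y = self.modules[name](x)
            outputs[name] = y
            pending.extend((nxt, y) for nxt in self.edges[name])
        return outputs


graph = NodeGraph()
graph.add("scale", lambda x: 2 * x)
graph.add("shift", lambda x: x + 1)
graph.wire("scale", "shift")
print(graph.run("scale", 3.0))         # {'scale': 6.0, 'shift': 7.0}
graph.add("shift", lambda x: x - 1)    # hot-swap; the wiring is unchanged
print(graph.run("scale", 3.0))         # {'scale': 6.0, 'shift': 5.0}
```

Keeping the edges keyed by name is the design choice that makes the swap safe: no other module holds a direct reference to the one being replaced.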

Real-Time Optimization and Resilient Fault Tolerance in Action

Once a modular network moves beyond static assembly and begins to regulate itself at runtime, real-time optimization and fault tolerance become its central operational concerns.

In this mode the network adapts continuously: feedback loops monitor load and adjust how work is distributed so that performance stays stable as demand varies.
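A feedback loop of this kind can be illustrated with a simple threshold controller. This sketch rests on assumptions the text does not state: load is measured once per step, each node has a fixed capacity, and the loop adds or removes one node at a time to keep utilization between a low and a high threshold. The function name `regulate` and all parameter values are hypothetical.

```python
def regulate(loads: list[float], capacity_per_node: float = 10.0,
             low: float = 0.4, high: float = 0.8,
             min_nodes: int = 1, max_nodes: int = 16) -> list[int]:
    """Hypothetical feedback loop: after each load measurement, adjust the
    number of active nodes by at most one to keep utilization in [low, high]."""
    nodes = min_nodes
    trace = []
    for load in loads:
        utilization = load / (nodes * capacity_per_node)  # measured signal
        if utilization > high and nodes < max_nodes:
            nodes += 1   # overloaded: bring another node online
        elif utilization < low and nodes > min_nodes:
            nodes -= 1   # underused: release a node to save resources
        trace.append(nodes)
    return trace


print(regulate([5, 25, 25, 25, 25, 5, 5, 5, 5]))
# [1, 2, 3, 4, 4, 3, 2, 1, 1]
```

Changing the node count one step at a time, with a gap between `low` and `high`, keeps the loop from oscillating when load hovers near a single threshold.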

Real-time optimization directs resources to where they are needed, while fault tolerance keeps the system running through partial failures, with neighboring nodes taking over work from nodes that fail.
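Neighbors taking over from failed nodes can be sketched as ordered failover: a request goes to the first node, and if that node fails, the next neighbor is tried. The sketch assumes nodes are plain callables that signal failure by raising a hypothetical `NodeFailure` exception; `call_with_failover` is likewise an illustrative name.

```python
from typing import Callable, Sequence


class NodeFailure(Exception):
    """Hypothetical error raised by a node that cannot serve a request."""


def call_with_failover(nodes: Sequence[Callable[[int], int]], request: int) -> int:
    """Send the request to each node in order and return the first success.
    Raises RuntimeError only if every node fails."""
    last_error = None
    for node in nodes:
        try:
            return node(request)
        except NodeFailure as exc:
            last_error = exc  # note the failure and fall through to the next node
    raise RuntimeError(f"all {len(nodes)} nodes failed") from last_error


def offline_node(request: int) -> int:
    raise NodeFailure("node offline")


def healthy_node(request: int) -> int:
    return request * 2


print(call_with_failover([offline_node, healthy_node], 21))  # 42
```

Only `NodeFailure` triggers failover; any other exception still propagates, so programming errors are not silently masked as node outages.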

Together, these mechanisms let the network operate autonomously, so it can be deployed flexibly while its behavior remains predictable.

Energy-Efficient Inference for Dynamic Environments

Energy-efficient inference in dynamic environments requires models that adapt their computational effort to shifting input characteristics without sacrificing accuracy. Such a model estimates how demanding each input is and allocates computation accordingly, spending less on easy inputs and more on hard ones while preserving core performance.
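One common way to allocate computation per input is an early-exit cascade: a cheap model answers first, and a more expensive model runs only when the cheap one is not confident enough. This is an assumed illustration of allocating resources where needed, not the node's documented mechanism; both models and the confidence threshold are hypothetical stand-ins.

```python
from typing import Callable, List, Optional, Sequence, Tuple

# A stage maps an input to (label, confidence in [0, 1]).
Stage = Callable[[Sequence[float]], Tuple[int, float]]


def cascade_predict(x: Sequence[float], stages: List[Stage],
                    threshold: float = 0.9) -> Tuple[Optional[int], int]:
    """Run stages from cheapest to most expensive and stop at the first one
    whose confidence reaches the threshold; if none does, the last stage's
    label is returned. Returns (label, stages_used)."""
    label: Optional[int] = None
    used = 0
    for stage in stages:
        label, confidence = stage(x)
        used += 1
        if confidence >= threshold:
            break  # confident enough: skip the remaining, costlier stages
    return label, used


def small_model(x: Sequence[float]) -> Tuple[int, float]:
    # Hypothetical cheap model: confident only when the mean is far from zero.
    mean = sum(x) / len(x)
    return (1 if mean > 0 else 0), min(1.0, abs(mean))


def large_model(x: Sequence[float]) -> Tuple[int, float]:
    # Hypothetical expensive model: always returns a confident answer.
    return (1 if sum(x) > 0 else 0), 0.99


print(cascade_predict([2.0, 2.0], [small_model, large_model]))    # (1, 1)
print(cascade_predict([0.1, -0.05], [small_model, large_model]))  # (1, 2)
```

The second element of the result counts how many stages ran, which is a rough proxy for the compute spent on that input.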

Relevant techniques include adaptive pruning (removing low-importance weights or units), conditional computation (activating only the parts of a model that a given input needs), and throughput-aware scheduling (ordering and batching work to match available capacity). Combined, they aim to reduce energy use without giving up robustness, so inference stays reliable as conditions change.
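Adaptive pruning can be sketched with magnitude pruning, a widely used heuristic that keeps only the largest-magnitude weights. To make it adaptive, the sketch assumes a hypothetical energy budget between 0 and 1 that sets the fraction of weights kept; the budget policy, the `floor` value, and both function names are illustrative assumptions.

```python
def keep_fraction_for_budget(energy_budget: float, floor: float = 0.25) -> float:
    # Hypothetical policy: a tighter budget keeps fewer weights, but never
    # fewer than `floor` of them, so the model retains some capacity.
    return max(floor, min(1.0, energy_budget))


def magnitude_prune(weights: list[float], keep_fraction: float) -> list[float]:
    """Zero out all but the largest-magnitude weights."""
    if not 0.0 <= keep_fraction <= 1.0:
        raise ValueError("keep_fraction must be in [0, 1]")
    k = round(len(weights) * keep_fraction)
    # Rank indices by |weight|, largest first; ties broken by position.
    ranked = sorted(range(len(weights)), key=lambda i: (-abs(weights[i]), i))
    keep = set(ranked[:k])
    return [w if i in keep else 0.0 for i, w in enumerate(weights)]


weights = [0.1, -0.9, 0.5, -0.2]
fraction = keep_fraction_for_budget(0.5)        # 0.5
print(magnitude_prune(weights, fraction))       # [0.0, -0.9, 0.5, 0.0]
```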

Conclusion

The Hyper Flow 971991551 Neural Node describes a modular, self-organizing unit that localizes learning while enabling global coordination. Its plug-and-play design supports scalable growth without compromising performance, and its real-time feedback mechanisms provide fault tolerance and adaptive resource allocation. In dynamic environments, energy-efficient inference is expected to follow from localized processing and lightweight communication between nodes. Taken together, these properties describe a network that manages complexity through local decisions and remains stable as conditions change.